After anki trashed my history, uploaded the trashed history to the sync server, and then repeatedly re-synced the trashed history every time I tried to restore from backup, I wrote my own spaced repetition app. It reads input from a markdown file, asks questions in the terminal and stores state in a json file. I wrote those few hundred lines of code in an afternoon and I've been using it ever since.

I wanted to run it on my android phone too, but after several days of struggling and several gigabytes of IDE downloads I lost interest.

This is my typical experience. Extending and customizing my laptop is a routine affair. Extending and customizing my phone is so painful that I give up every time. The software on my laptop feels like a comfortable old boot, worn in over years of tweaking and scripting. The software on my phone feels like a slot machine that moves all the buttons around once a month and holds my eyes open while the ads play. It's shiny and polished, but it isn't on my side.

The pinephone is a $150 mobile phone that aims to be able to run mainline linux. I bought one hoping to create an experience more like the experience of using my laptop.

Obviously writing an entire mobile suite is a big project, so I'm relentlessly cutting corners wherever possible and adopting an aesthetic of simplicity > capability.

I just finished the first milestone - porting my little spaced repetition app.

Installing an operating system

I installed mobile-nixos. The docs at the time were very barebones but samueldr was kind enough to walk me through it over irc and I wrote down the process here.

I haven't gotten around to writing a system configuration yet, but once I do it will be a single-click deploy with nixops deploy -d focus - a big improvement over the LineageOS update process. The default configuration looks like this.

Cross-compiling the dependencies

Since the phone is running a normal linux userland it is possible to compile directly on the phone. But my laptop compiles things ~10x faster so it seemed worth investing in cross-compilation.

The first step is getting hold of arm64 versions of all my dependencies. This is done in shell.nix.

I pin the version of nixpkgs to make sure that both local and remote development use the exact same version of each dependency.

    nixpkgs = builtins.fetchTarball {
        name = "nixos-20.03";
        url = "https://github.com/NixOS/nixpkgs/archive/20.03.tar.gz";
        sha256 = "0182ys095dfx02vl2a20j1hz92dx3mfgz2a6fhn31bqlp1wa8hlq";
    };

Nix recently gained support for cross-compilation but many packages don't cross-compile successfully yet. So the trick is to set up for cross-compilation, but grab some packages from the native arm64 repo. These won't build from source on a non-arm64 machine but there are pre-built versions available in the nixpkgs binary cache.

    armPkgs = import nixpkgs {
        system = "aarch64-linux";
    };

    crossPkgs = import nixpkgs {
        crossSystem = hostPkgs.lib.systems.examples.aarch64-multiplatform;
        overlays = [(self: super: {
            inherit (armPkgs)
                gcc
                mesa
                libGL
                SDL2
            ;
        })];
    };

Most config is shared between local and cross builds, so shell.nix takes a boolean argument that tells it which we're attempting.

    targetPkgs = if cross then crossPkgs else hostPkgs;

We need pkg-config and patchelf runnable on the host machine because they are used during compilation. And we need libGL and SDL2 runnable on the target machine to link against.

    buildInputs = [
        hostPkgs.pkg-config
        hostPkgs.patchelf
        targetPkgs.libGL.all
        targetPkgs.SDL2.all
    ];

Now we can do nix-shell to drop into a shell set up for local compilation, or nix-shell --arg cross true to drop into a shell set up for cross-compilation.

Cross-compiling my code

I wrote everything in zig because I'm trying to keep the whole system small, simple and fast. Zig is a small, simple, fast language that also cares a lot about cross-compilation.

The build.zig is mostly self-explanatory. Cross-compiling with zig takes almost no effort.

The one hitch is that it struggled to find headers using pkg-config when cross-compiling. I haven't tried to debug this - just passed them directly instead.

In shell.nix:

    NIX_LIBGL_DEV=targetPkgs.libGL.dev;
    NIX_SDL2_DEV=targetPkgs.SDL2.dev;

In build.zig:

    ...
    try includeNix(exe, "NIX_LIBGL_DEV");
    try includeNix(exe, "NIX_SDL2_DEV");
    ...

    fn includeNix(exe: *std.build.LibExeObjStep, env_var: []const u8) !void {
        var buf = std.ArrayList(u8).init(allocator);
        defer buf.deinit();
        try buf.appendSlice(std.os.getenv(env_var).?);
        try buf.appendSlice("/include");
        exe.addIncludeDir(buf.items);
    }

Zig also didn't set paths correctly in the resulting binary but this is reasonable - there is no way for it to know that I'm cross-compiling to an identical nix system so it defaults to some reasonable heuristics. I just patch the binary after compilation.

In shell.nix:

    NIX_GCC=targetPkgs.gcc;
    NIX_LIBGL_LIB=targetPkgs.libGL;
    NIX_SDL2_LIB=targetPkgs.SDL2;

In sync:

    patchelf --set-interpreter $(cat $NIX_GCC/nix-support/dynamic-linker) zig-cache/focus-cross
    patchelf --set-rpath $NIX_LIBGL_LIB/lib:$NIX_SDL2_LIB/lib zig-cache/focus-cross
    scp ./zig-cache/focus-cross $FOCUS:/home/jamie/focus

The build process is then either zig build local or zig build cross && ./sync.
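A slightly more defensive version of sync might look like the sketch below. It fails early when one of the NIX_* variables is missing, which is what happens if the script is run outside the cross shell. The helper names require_env, join_rpath and sync_binary are made up for illustration, and it assumes FOCUS holds the ssh destination of the phone; the patchelf and scp invocations are the same ones used in sync.

```shell
#!/bin/sh
# Sketch of a more defensive sync script. The helper names are hypothetical;
# the patchelf and scp commands mirror the ones in the original sync script.

# Fail with a message if any named environment variable is unset or empty.
require_env() (
    for name in "$@"; do
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "missing environment variable: $name" >&2
            exit 1
        fi
    done
)

# Join the lib directories of the given store paths into a colon-separated rpath.
join_rpath() (
    rpath=""
    for dir in "$@"; do
        rpath="${rpath:+$rpath:}$dir/lib"
    done
    printf '%s\n' "$rpath"
)

# Patch the cross-compiled binary for the phone's nix store, then copy it over.
# Assumes FOCUS is the ssh destination, as in the original scp command.
sync_binary() (
    binary="$1"
    require_env NIX_GCC NIX_LIBGL_LIB NIX_SDL2_LIB FOCUS || exit 1
    patchelf --set-interpreter "$(cat "$NIX_GCC/nix-support/dynamic-linker")" "$binary" || exit 1
    patchelf --set-rpath "$(join_rpath "$NIX_LIBGL_LIB" "$NIX_SDL2_LIB")" "$binary" || exit 1
    scp "$binary" "$FOCUS:/home/jamie/focus"
)
```

Ending the script with sync_binary zig-cache/focus-cross would then do the same work as the three commands in sync. From outside the shell, something like nix-shell --arg cross true --run 'zig build cross && ./sync' should run the whole cross build in one go, since --run executes a command inside the shell environment instead of dropping into an interactive prompt.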

Since both laptop and phone are running the same operating system with the same pinned dependencies I don't bother to use a virtual machine for local development. I can just mock out the device sensors in code and everything else will run the same.

Writing a simple GUI library

I'm writing an immediate-mode GUI library because I find them much simpler than most retained systems, in terms of both lines of code and mental overhead. Our Machinery recently gave a GDC talk that lays out the rationale.

Battery usage is often given as a concern, but on my laptop this app hovers around 1% cpu at all times whereas gnome-calculator reaches 20-30% whenever I press buttons. If this becomes a problem with more complex UIs I can add some caching at the draw command layer.

There are definitely some things that may be difficult to do well in immediate-mode, like complex reactive layouts, but luckily they aren't things that I care about doing on my phone.

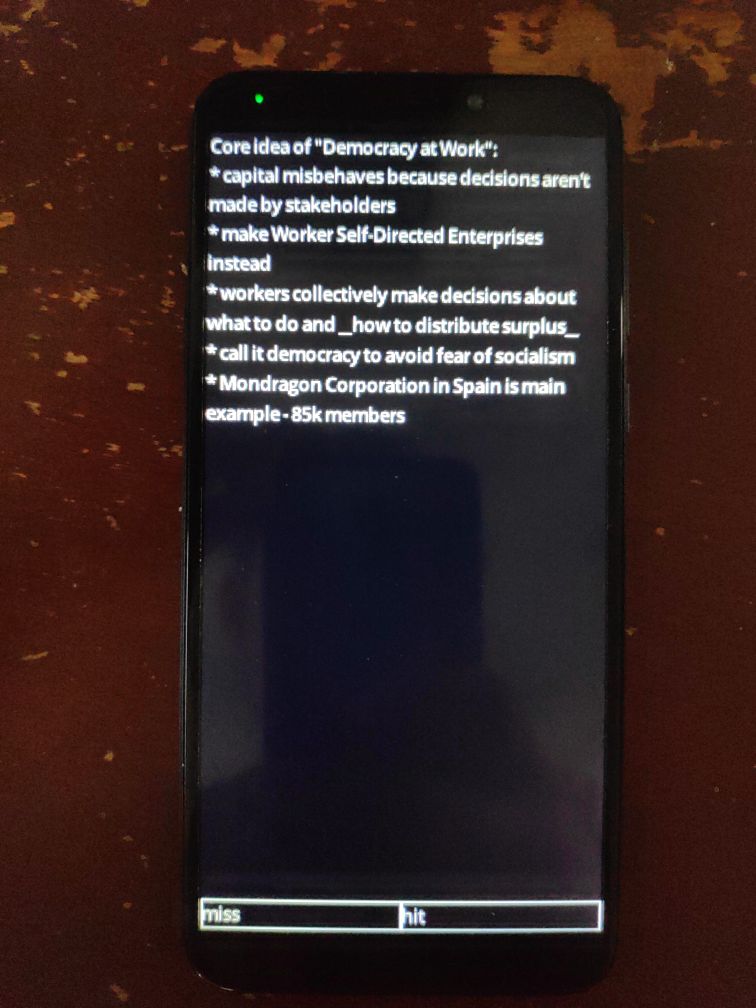

I copied my renderer and font atlas from microui and I've built just enough UI library to get things working. Here is the entire UI for the spaced repetition app:

    if (ui.key orelse 0 == 'q') {
        try saveLogs(self.logs.items);
        std.os.exit(0);
    }
    const white = UI.Color{ .r = 255, .g = 255, .b = 255, .a = 255 };
    var text_rect = rect;
    var button_rect = text_rect.splitBottom(atlas.text_height);
    switch (self.state) {
        .Prepare => {
            try ui.text(text_rect, white, try format(allocator, "{} pending", .{self.queue.len}));
            if (try ui.button(button_rect, white, "go")) {
                self.state = .Prompt;
            }
        },
        .Prompt => {
            const next = self.queue[0];
            const text = try format(
                allocator,
                "{}\n\n(urgency={}, interval={})",
                .{
                    next.cloze.renders[next.state.render_ix],
                    next.state.urgency,
                    next.state.interval_ns
                }
            );
            try ui.text(text_rect, white, text);
            if (try ui.button(button_rect, white, "show")) {
                self.state = .Reveal;
            }
        },
        .Reveal => {
            const next = self.queue[0];
            try ui.text(rect, white, try format(allocator, "{}", .{next.cloze.text}));
            var event_o: ?Log.Event = null;
            var miss_rect = button_rect;
            var hit_rect = miss_rect.splitRight(@divTrunc(miss_rect.w, 2));
            if (try ui.button(miss_rect, white, "miss")) {
                event_o = .Miss;
            }
            if (try ui.button(hit_rect, white, "hit")) {
                event_o = .Hit;
            }
            if (event_o) |event| {
                try self.logs.append(.{
                    .at_ns = std.time.milliTimestamp() * 1_000_000,
                    .cloze_text = next.cloze.text,
                    .render_ix = next.state.render_ix,
                    .event = event,
                });
                self.queue = self.queue[1..];
                if (self.queue.len == 0) {
                    self.queue = try sortByUrgency(&self.frame_arena, self.clozes, self.logs.items);
                    self.state = .Prepare;
                } else {
                    self.state = .Prompt;
                }
            }
        },
    }

I really like that it's just straight code - not split across multiple files or classes or callbacks. You can just read it from top to bottom. If I want to abstract over some component or layout I can just put it in a function.

Obviously it needs a lot of work to improve anti-aliasing, fonts, layout etc., but I think those can be done without complicating the interface above.